At Computer Aid we attend events from all corners of the IT world, and this year in particular there has been a focus on gender equality and on diversifying the speakers who are booked. The issue came to a head at the Public Sector ICT summit, where Kit Collingwood, Deputy Director Universal Credit, Department for Work and Pensions, pointed out that she was the only woman booked on the panel and stated firmly that she wouldn't attend an event again where that was the case.

Just that morning, while I was networking with fellow attendees, one of them informed me that there was no need for the Women in Information Technology discussion later in the day, as "It's nothing new is it, women have been in tech for ages". He obviously hadn't read the statistic accompanying the title of the talk: only 17% of employees in the UK tech sector are women.

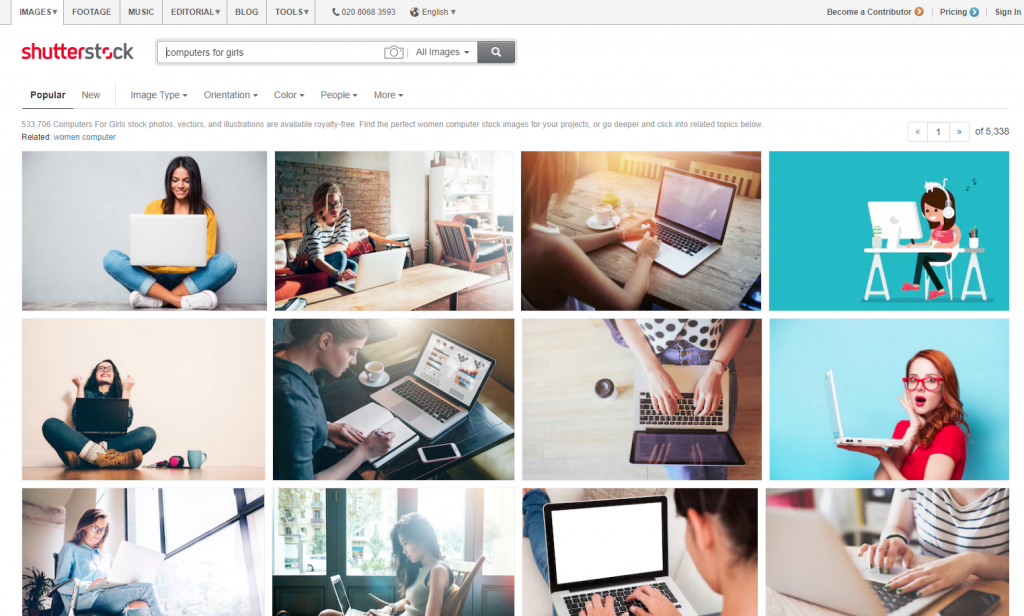

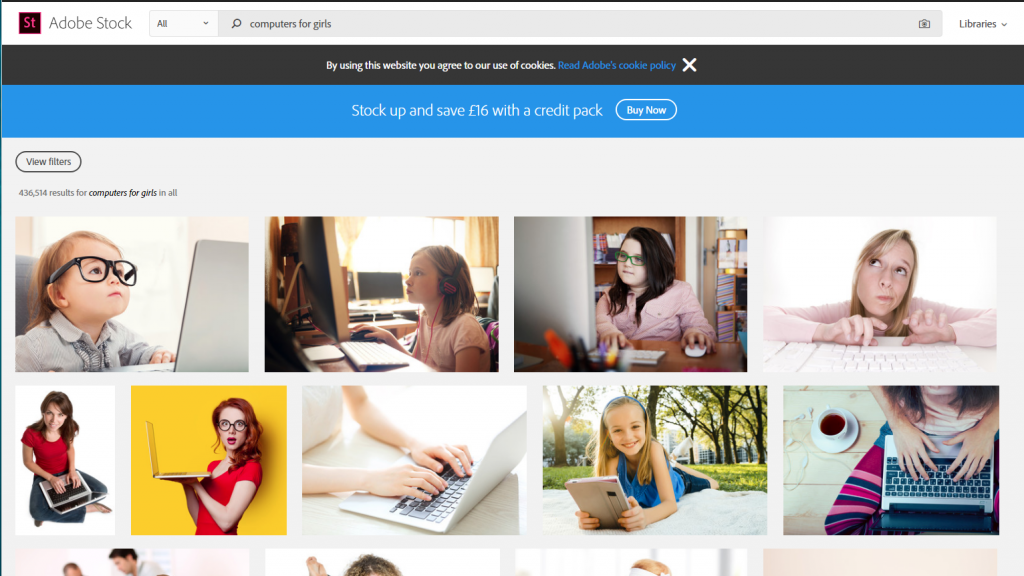

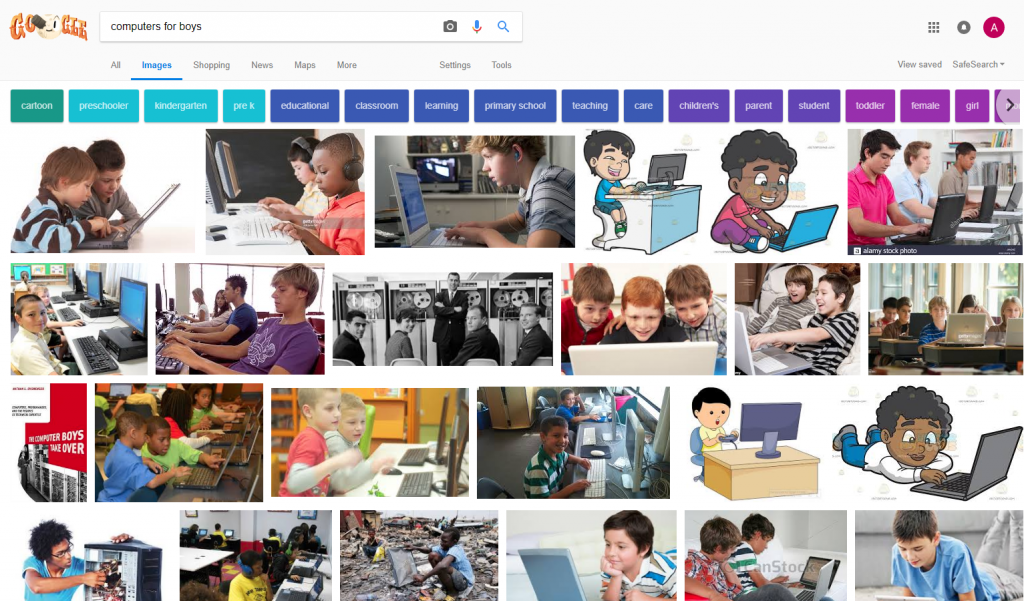

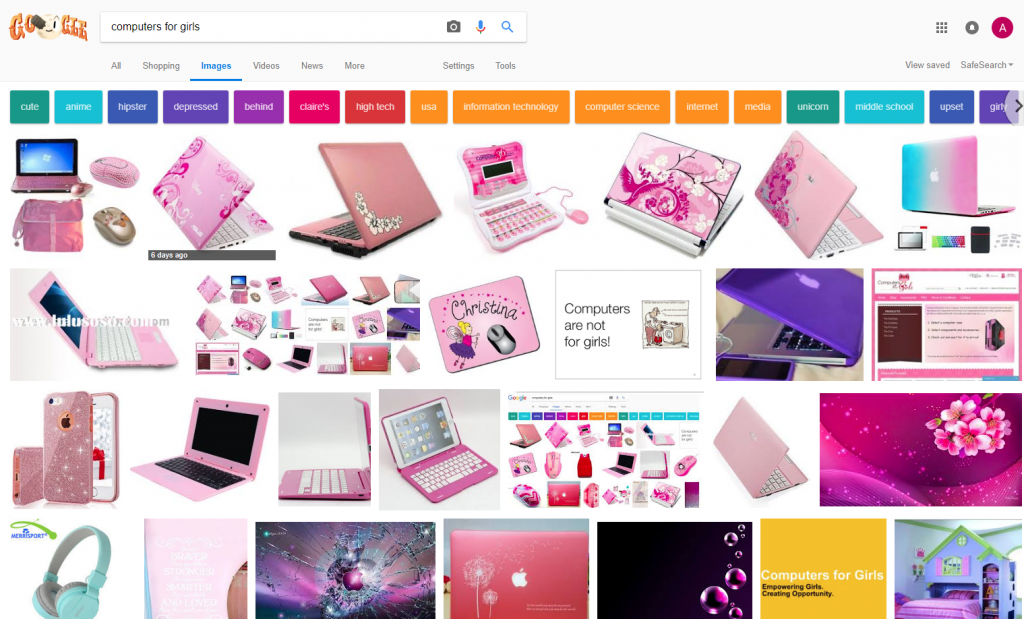

This isn't the first time Google's search algorithm has had an issue with the way it identifies images. Back in 2015, Jacky Alciné pointed out that Google Photos was classifying his black friends as “gorillas.”

But how does a search engine make these choices? To explain more I've included this video:

Given that the search engine's results are based on the PageRank algorithm, which ranks a page more highly the more other pages link to it, these images appear because people are linking more frequently to pages featuring stereotypical pink computers than to positive web pages such as Stemettes, Code First: Girls, Girls Who Code and the many other wonderful organizations that promote equality in the tech sector. Part of this might be that these websites don't have a lot of images available, but more likely it has to do with the entrenched gender stereotypes we see in society today.
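For readers curious about how link counts turn into rankings, here is a minimal sketch of the basic PageRank idea in Python. It is a simplified illustration, not how Google's image search actually works today (real ranking uses many more signals), and the page names in the toy web below are hypothetical, made up purely for this example.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Return a PageRank score for every page in a small link graph.

    `links` maps each page to the list of pages it links to.
    The damping factor of 0.85 is the value commonly quoted for PageRank.
    """
    # Collect every page that appears as a source or as a link target.
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    n = len(pages)

    # Start with the rank spread evenly across all pages.
    ranks = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Every page receives a small baseline share of rank.
        new_ranks = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its rank equally among the pages it links to.
                share = damping * ranks[page] / len(targets)
                for target in targets:
                    new_ranks[target] += share
            else:
                # A page with no outgoing links spreads its rank over everyone.
                share = damping * ranks[page] / n
                for other in pages:
                    new_ranks[other] += share
        ranks = new_ranks
    return ranks


# Hypothetical toy web: three blogs link to a stereotyped product page,
# but only one of them also links to a coding charity.
links = {
    "blog_a": ["pink_laptop_page"],
    "blog_b": ["pink_laptop_page"],
    "blog_c": ["pink_laptop_page", "coding_charity_page"],
    "pink_laptop_page": [],
    "coding_charity_page": [],
}

ranks = pagerank(links)
for page, score in sorted(ranks.items(), key=lambda item: item[1], reverse=True):
    print(f"{page}: {score:.3f}")
```

In this toy web the stereotyped page ends up with the highest score simply because more pages point to it, which is the effect described above: the ranking reflects what people choose to link to.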

We've tackled gender bias before in our work with the University of Zimbabwe. There it was found that, although male and female students had equal access, 87% of the students using the university’s ICT labs were male and only 13% were female (despite an almost equal enrolment of 51% women to 49% men). This was thought to be the result of 'socialized' inequality, where female students felt they didn't have as much right to access the labs as the male students. We addressed this societal issue by creating female-only labs, a space designed for the 51% of enrolled students who are women.

This allowed female students to gain basic ICT skills that would give them better employment opportunities in the future and more options for further study. By acknowledging the societal problem and building it into our solution, we sparked a change and challenged the view that ICT is the preserve of male students in Zimbabwe’s higher education system.